Making Sense of the AI Alphabet Soup in POCUS

Cristiana Baloescu, MD, MPH

Rachel B. Liu, MD, FACEP

Artificial intelligence (AI) in medicine is expanding rapidly, with the majority of FDA-authorized AI-enabled medical devices currently concentrated in radiology.1 Within emergency medicine, point-of-care ultrasound (POCUS) is quickly becoming a major growth area for advanced image-based algorithms to support image acquisition and bedside decision-making in real time.

At its core, AI refers to computer systems performing tasks that typically require human intelligence—tasks such as recognizing patterns, interpreting images, making predictions, or processing language.2 Within this broad umbrella sit several related concepts that often get blended together.

AI Alphabet Soup

Machine learning (ML) is one major branch of AI and describes models that learn patterns directly from data instead of relying on explicit rules programmed by developers.3

Deep learning (DL), a specialized subset of machine learning, uses multi-layer neural networks modeled loosely after the human brain. This approach has driven most of the recent breakthroughs in image interpretation, which explains why nearly all AI tools currently being developed for POCUS rely on deep learning.3

Computer vision is the field of AI, now dominated by deep learning methods, that focuses specifically on analyzing images and video. It is consequently at the heart of nearly every AI-in-POCUS system.3

Natural language processing (NLP) is a branch of artificial intelligence that enables machines to analyze, interpret, and generate written or spoken language. This is likely the form of AI most commonly encountered in everyday applications.

- Large language models (LLMs) are AI models trained on very large text datasets to interpret and generate human language. More recently, vision-language models (VLMs) have integrated LLMs with computer vision, making them particularly relevant to the POCUS workflow by supporting image-aware interpretation, automated reporting, and streamlined clinical communication.4

Generative AI refers to models capable of creating new content such as text or images, as seen in tools like ChatGPT.

Agentic AI refers to systems that act proactively and independently, making decisions and performing tasks with minimal human oversight.

How AI Learns in POCUS

There are three main traditional approaches to algorithm-based machine learning: supervised, unsupervised, and reinforcement learning. Most POCUS-focused machine learning relies on supervised learning, in which ultrasound experts label training datasets to create “ground truths.” The model then learns to replicate or match the ground truth, such as identifying frames that contain B-lines or tracing the pleural line. In contrast, unsupervised learning analyzes unlabeled data to identify patterns or clusters without expert-defined labels, and reinforcement learning trains an algorithm through trial-and-error interactions, using rewards or penalties to guide decision-making.2
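The supervised approach described above can be illustrated with a deliberately tiny sketch. It assumes each ultrasound frame has already been reduced to two made-up numeric features (the feature names, values, and labels are hypothetical), and it uses a simple nearest-centroid rule in place of the deep neural networks that real POCUS tools rely on. The point is only to show how expert labels act as the ground truth the model learns to match.

```python
from statistics import mean

# Hypothetical training set: (vertical_artifact_score, pleural_brightness)
# paired with an expert-assigned label (the "ground truth").
labeled_frames = [
    ((0.9, 0.7), "b_lines"),
    ((0.8, 0.6), "b_lines"),
    ((0.7, 0.8), "b_lines"),
    ((0.1, 0.5), "no_b_lines"),
    ((0.2, 0.4), "no_b_lines"),
    ((0.3, 0.6), "no_b_lines"),
]


def train(examples):
    """Learn one centroid (mean feature vector) per expert label."""
    by_label = {}
    for features, label in examples:
        by_label.setdefault(label, []).append(features)
    return {
        label: tuple(mean(column) for column in zip(*rows))
        for label, rows in by_label.items()
    }


def predict(model, features):
    """Assign the label whose centroid is closest to the new frame."""
    def squared_distance(centroid):
        return sum((a - b) ** 2 for a, b in zip(features, centroid))

    return min(model, key=lambda label: squared_distance(model[label]))


model = train(labeled_frames)
print(predict(model, (0.85, 0.65)))  # a new, unlabeled frame
```

Because the model only ever sees the expert labels, any systematic labeling error would be learned along with the true pattern.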

Supervised learning is labor-intensive because it requires expert reviewers and large, well-curated ultrasound datasets.5 The dynamic and operator-dependent nature of POCUS makes dataset standardization more challenging than it is for static imaging modalities like CT or MRI. Additionally, the quality of the model's output is limited by the quality of the expert labels it was trained on. Although labor- and data-intensive, this approach has created, and will continue to create, opportunities for POCUS experts to collaborate with AI developers to build prospective datasets for training, validation, and testing.
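One practical detail when dividing a dataset into training, validation, and testing portions is how the split is made. The sketch below shows one common safeguard, which is an assumption here rather than something described in this article: splitting by patient, so that clips from the same patient never appear in more than one portion. The field names (`patient_id`, `clip`), the split fractions, and the function name are all hypothetical.

```python
import random


def split_by_patient(clips, fractions=(0.7, 0.15, 0.15), seed=0):
    """Split clips into train/validation/test so no patient spans two sets."""
    patient_ids = sorted({clip["patient_id"] for clip in clips})
    random.Random(seed).shuffle(patient_ids)  # reproducible shuffle

    n_train = int(len(patient_ids) * fractions[0])
    n_val = int(len(patient_ids) * fractions[1])
    groups = {
        "train": set(patient_ids[:n_train]),
        "validation": set(patient_ids[n_train:n_train + n_val]),
        "test": set(patient_ids[n_train + n_val:]),  # remainder
    }
    return {
        name: [clip for clip in clips if clip["patient_id"] in ids]
        for name, ids in groups.items()
    }


# Hypothetical dataset: 20 patients with 3 clips each.
clips = [
    {"patient_id": f"P{p:02d}", "clip": k}
    for p in range(20)
    for k in range(3)
]
splits = split_by_patient(clips)
for name, subset in splits.items():
    print(name, len(subset), "clips")
```

Splitting at the clip level instead would let near-identical frames from one patient land in both training and testing, which tends to make a model look better than it is.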

AI in POCUS

POCUS provides dynamic, real-time imaging, the type of data that DL and computer vision are well suited to analyzing. Challenges such as variable image quality, artifacts, and lack of standardized acquisition protocols also present opportunities for AI to provide support.6 Members of the ACEP US Industry Round Table (IRT) recognize that AI terminology can mean different things to different audiences. To address this, in 2022 they codified five domains of AI applicable to the POCUS community: education, clinical, workflow, research, and administration.

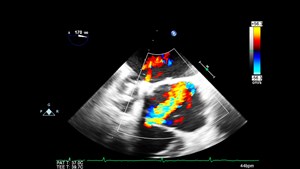

Although many systems remain in development, several AI tools are already commercially available across lung, cardiac, abdominal, musculoskeletal, and vascular applications. The capabilities of these systems can generally be grouped into detection, classification, segmentation, and acquisition support.7 Detection tools identify structures or abnormalities—such as B-lines, ascites, free fluid, or the diaphragm. Classification algorithms help categorize pathology or disease stage, including automated estimation of ejection fraction or severity scoring for pulmonary edema. Segmentation models outline anatomical structures like the pleural line, rib shadows, or left ventricular borders, enabling more accurate quantification. Some newer systems also assist during image acquisition by scoring image quality, triggering auto-capture when optimal views are obtained, guiding probe maneuvers, or automatically labeling anatomy during the scan.8 These features may be valuable for novice users because they offer immediate, standardized feedback without requiring constant real-time supervision.
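The auto-capture behavior mentioned above can be sketched in a few lines. This is an illustrative assumption about how such a trigger might work, not a description of any commercial system: a hypothetical quality model emits a score between 0 and 1 for each frame, and a clip is captured once the score has stayed at or above a threshold for several consecutive frames. The threshold, the frame count, and the function name are made up for the example.

```python
def auto_capture_indices(quality_scores, threshold=0.8, stable_frames=3):
    """Return frame indices at which an auto-capture would fire.

    A capture fires when the quality score has been at or above
    `threshold` for exactly `stable_frames` consecutive frames, so a
    single long run of good frames triggers only one capture.
    """
    captured = []
    run_length = 0
    for index, score in enumerate(quality_scores):
        run_length = run_length + 1 if score >= threshold else 0
        if run_length == stable_frames:
            captured.append(index)
    return captured


# Hypothetical per-frame quality scores from a scan.
scores = [0.4, 0.85, 0.9, 0.6, 0.82, 0.88, 0.91, 0.95, 0.5]
print(auto_capture_indices(scores))  # → [6]
```

Requiring several consecutive good frames, rather than a single one, is a simple way to avoid capturing a view that was only briefly in plane.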

AI’s impact can extend beyond image interpretation via automated archiving, exam-type recognition, quality improvement feedback, and streamlined documentation. Many of these capabilities are already integrated into commercial systems. Some platforms detect the type of exam based on the probe or image content and automatically populate documentation fields. Others provide real-time quality assessments that historically required manual review by ultrasound faculty. These workflow enhancements could help reduce variability among operators, standardize image quality across a department, and save substantial time on the administrative aspects of a POCUS program.

Although the possibilities of AI in POCUS are expanding quickly, it is important to recognize that most current work still focuses on algorithm development and initial validation rather than real-world implementation. To date, relatively few studies have evaluated whether these tools improve clinical outcomes, streamline workflows, or meaningfully enhance education compared with simply providing more supervised scanning opportunities. As computing power continues to increase and larger, expertly labeled ultrasound datasets become available, AI will almost certainly become woven into everyday POCUS practice for emergency clinicians. However, how these tools are integrated and implemented in real clinical environments still needs much more study to determine whether they truly add value or simply introduce additional steps into an already demanding workflow.

Q&A Recap

Q: Is AI the same as machine learning?

No. ML is one subset of AI.

Q: Is deep learning the same as machine learning?

No. Deep learning is a specific type of machine learning that uses multi-layer neural networks loosely modeled on the human brain.

Q: Where does computer vision fit?

Computer vision is the area of AI focused on image and video interpretation. Most current computer vision systems are built on deep learning.

Q: How does this apply to POCUS?

AI—particularly computer vision algorithms—can guide novice users, evaluate image quality, and assist in interpretation.

Q: Where does NLP fit in?

NLP tools work with language, not images, and may help with reporting, documentation, and communication.

References

1. Artificial Intelligence and Machine Learning (AI/ML)-Enabled Medical Devices. FDA. Published online December 6, 2023. Accessed December 12, 2025. https://www.fda.gov/medical-devices/software-medical-device-samd/artificial-intelligence-and-machine-learning-aiml-enabled-medical-devices

2. Mueller B, Kinoshita T, Peebles A, Graber MA, Lee S. Artificial intelligence and machine learning in emergency medicine: a narrative review. Acute Med Surg. 2022;9(1):e740.

3. Chartrand G, Cheng PM, Vorontsov E, Drozdzal M, Turcotte S, Pal CJ, et al. Deep Learning: A Primer for Radiologists. Radiographics. 2017;37(7):2113-31.

4. Ryu JS, Kang H, Chu Y, Yang S. Vision-language foundation models for medical imaging: a review of current practices and innovations. Biomed Eng Lett. 2025;15(5):809-30.

5. Shokoohi H, LeSaux MA, Roohani YH, Liteplo A, Huang C, Blaivas M. Enhanced Point-of-Care Ultrasound Applications by Integrating Automated Feature-Learning Systems Using Deep Learning. J Ultrasound Med. 2019;38(7):1887-97.

6. Park SH. Artificial intelligence for ultrasonography: unique opportunities and challenges. Ultrasonography. 2021;40(1):3-6.

7. Sonko ML, Arnold TC, Kuznetsov IA. Machine Learning in Point of Care Ultrasound. POCUS J. 2022;7(Kidney):78-87.

8. Baloescu C, Bailitz J, Cheema B, et al. Artificial Intelligence–Guided Lung Ultrasound by Nonexperts. JAMA Cardiol. 2025;10(3):245-53.